See my first article for a short review and teardown:

http://hackcorrelation.blogspot.de/2016/02/inside-stuff-racechip-car-tuning-thingie.html

First off let me start by saying I consider this fair use, as the instructions and website do not explain what the settings do, how the unit actually functions and what effects it can have. The description below refers to a single-channel Diesel engine (single common rail).

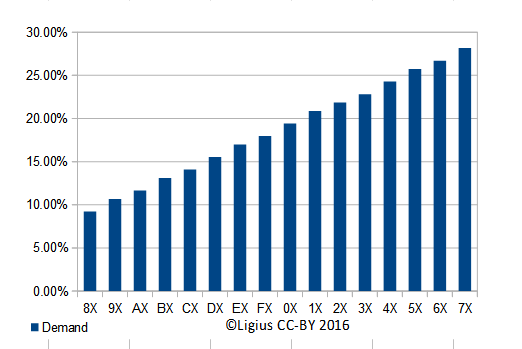

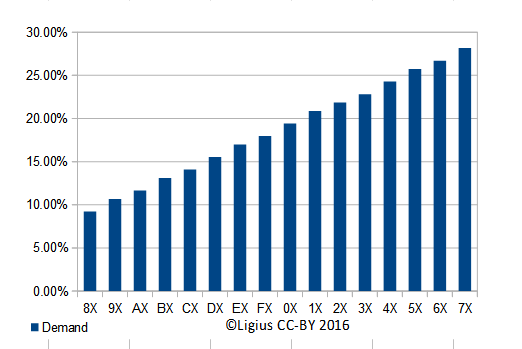

The chart above shows 4 possible settings and their effects.

The first rotary encoder controls how much 'extra power' is requested. See 9E vs. BE vs. 4E

The second rotary encoder controls the RPM high range (endpoint). See BE vs B7.

The encoders go from 8 (minimum) through 9, A, B, C, ..., F, 0, 1 ... 7 (maximum).

The second encoder will control when the RaceChip unit will cease intervention: a setting of X8 means at around 30% load (or RPM), X0 at around 60% load, X7 would perform until 100% of the RPM range. Those are just very loose numbers and will vary with every engine type.

The low-range (starting point) cannot be configured, in my case it starts from idle.

The unit will intercept the common rail pressure sensor value and will send a lower value back to the ECU (factory engine chip). This will make the ECU 'think' that the rail pressure has dropped, ordering the fuel pump to increase the pressure. That's what 'demand' or 'power increase' in the charts above means. This can or cannot translate to a real power increase, but it usually does.

The signal from that rail pressure sensor depends on many factors, but usually is proportional to the engine RPM under light driving conditions. Under heavy load or sudden acceleration the ECU will increase the pressure to (almost) 100%.

However, the ECU might order increased or decreased pressure when entering an emission cycle - warming up the engine from cold, heating the catalyst unit, purging the catalyst residue, etc. In this case Race

The first encoder will tell the unit how much to shift the signal. The shift (in voltage) is proportional to the tuning unit will interfere with this and caused increased emissions and/or increased fuel consumption. In my case it increases significantly (20-50%) the idle fuel consumption.

A setting too high will cause the engine light to come on under normal driving conditions.

The second encoder tells sets the maximum RPM or load that the unit should stop interfering. Since the offset applied to the sensor is proportional to the pressure this setting is really tied to the first one. Also, once maximum set RPM is exceeded the unit just delivers the original signal back to the ECU which might cause the latter to see a sudden 10-30% increase in rail pressure.

A setting too high will cause the engine light to come on under heavy acceleration or heavy load.

The unit provides no way of setting the lowest RPM or load or choose another curve that is not linear with sharp drops.

Most of the factory ECUs choose a different engine curve based on the rail pressure sensor so in a way it's very similar to a chip tuning that flashes new engine curves into the ECU, while still not exceeding maximum allowed settings.

First off, there should be no real danger to the engine, usually, the unit will just allow the ECU to choose another value that is already designed for the car.

An adverse effect might be that running with continued increased rail pressure could cause stress on the fuel lines that was not engineered for. This means that the lines might start leaking on old cars, the high-pressure fuel pump could wear prematurely, injectors could wear prematurely.

I don't see any way it could cause the engine to blow up, as some anecdotal reports have said.

There is a real possibility that the engine room noise will increase - the engine is working harder to make the high-pressure pump work harder. Both are the noisiest things around.

I don't see any way that a routine check will be able to detect that the unit was installed. So it's basically untraceable. However, pressure warnings - especially the ones resulting in a check engine light - might show up in the ECU log. But those can also be caused by lower-grade fuel or have other causes.

I'm more concerned about the sharp intervention of the unit, it's either on or off, there is a bit of hysteresis but no interpolation to factory defaults while outside the range. Since I don't have enough experience with this I would rate this worry as inconclusive.

Also, the fine spikes/dips of the sensor are being filtered out, in newer engines those might be used to optimize the parameters. It essentially means less information is getting through to the ECU which could mean that for example faulty or leaking injectors cannot be detected (if implemented).

Now that I know what to search for I've found several sources that discuss the pros and cons of these "diesel tuning boxes".

First read should be a scientific paper that has done all the required tests and yields about the same conclusion as my empirical testing: low-end torque is increased, fuel consumption at city speeds increases, consumption at highway speeds decreases.

http://www.ijesit.com/Volume%202/Issue%201/IJESIT201301_35.pdf

Short article: http://www.sttemtec.com/en/tuning-box/tuning-box.php

And another one: http://www.endtuning.com/dieseltuningboxes/

The latter seems to imply that fuel mileage would be false but in my experience the on-board-computer showed [almost] the same results as the actual measured consumption. As far as I know, that OBC figure is measured with the fuel flow sensors and not derived from injector turn-on time times rail pressure. YMMV (literally) but I think instant consumption is derived (so, false) while long-term consumption is measured.

Emissions are likely worsened, but I haven't seen any real test on that.

This is the meat of the stuff and what my report above is based upon.

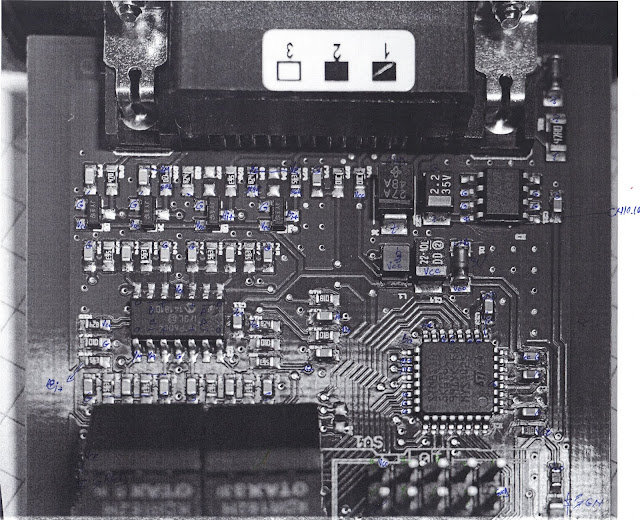

First off, a pen-annotated schematic that I've used to trace the connections:

Click to zoom in to see the annotations I'll be referring to.

G is ground, Vcc is supply voltage in (clamped to 3V), Ai+ means the positive input of the A amplifier. YF - yellow female lead, sensor input. YM - yellow male lead, unit output to ECU.

Engineering quality is good, there is plenty of protection which is good for reliability. That is mostly what you pay for in this unit. Feel free to study the image above, I will not go into more details on that.

The supply voltage (red wire, usually 5V) is clamped through the D1 Zener diode to~5V and used throughout for the microcontroller and the Microchip amplifier. Consumption is about 6mA.

There is an LM7805 in the upper right corner but I believe it's only used for programming, as it only goes to the CN10 connector in the lower right corner.

The unit can function as a 1-channel device or a 2-channel device, in my case you can see the sticker with the number '1' marked. A 2-channel device will probably have a different firmware, but it might be controlled only by the connector type.

The quad-opamp on the center-left is used in a buffer-amplifier configuration: units A and B buffer the input from the real sensor while C and D buffer the output from the microcontroller,

The [1-channel setup] flow is as follows:

Since the input is going through an RC filter there is no transient response - i.e. it does not care how many injectors there are or what they do or what the real engine RPM is.

There is a slight hysteresis implemented, of around 0.1V, but it may be just a side-effect.

At the cut-in and cut-off points, the output signal seems to converge slower - it's either a protection against an abrupt take-over or just a side-effect of the RC network or perhaps I was not loading the output enough.

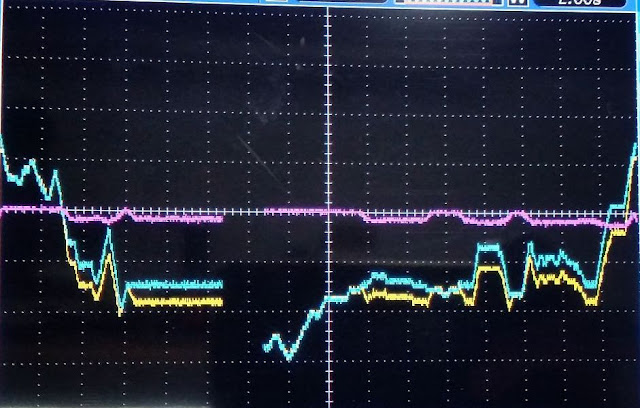

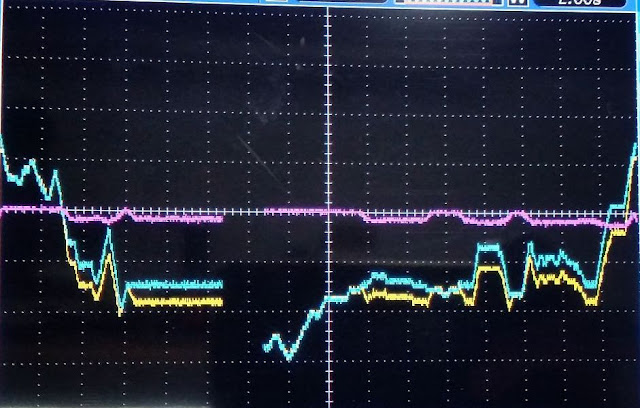

The above shows the response based on various inputs on a 2-second scale. You can just make out the roll-on and roll-off behavior. Light blue is the input, yellow is the output, magenta is the difference of those.

There is slightly more stuff happening than what I just wrote above but the basic concept is the same.

I won't provide any schematic for any those changes and won't respond to queries about absolute values (voltages, delays) - the former would just mean the company would not be able to sell its high-end variants and the latter would mean that anyone could produce a clone without any R&D effort.

You'd have to consider that coming up with such a device involves significant cost in design iterations, car testing, (adequate) support and warranty.

However I would love to see better designs and more car hacking from the community, it seems to always be a lacking topic compared to other beaten-to-death designs.

The design is overly simplistic:

In my opinion the 'range' encoder should actually select some preselected curves instead of providing small useless increments. The whole box could be replaced with a few standard parts: LM317 to provide end point voltage, Zener/LED for start point, opamp to subtract offset.

There is no real bypass and if the unit fails the signal is not forwarded (unaltered) to the car, it just dies.

There is plenty of flash space and power to do some real calculations and interpolations as well as some on-the-fly learning and semi-closed-loop adjustments.

The claims of xx millions operations per second on the product page are white lies, it actually does a few hundred, at best. Plus the opamp has a 1Mhz bandwidth.

A better topology would be a summing buffer amplifier - where one fixed PWM output (2.5V) is subtracted from the signal and another PWM output is added. That way, the 'power' can be increased or decreased (better fuel consumption) and in case of MCU failure the signal is passed unaltered.

http://hackcorrelation.blogspot.de/2016/02/inside-stuff-racechip-car-tuning-thingie.html

First off let me start by saying I consider this fair use, as the instructions and website do not explain what the settings do, how the unit actually functions and what effects it can have. The description below refers to a single-channel Diesel engine (single common rail).

Settings

First off, most of the users will just want the simple explanations, here's a graph that should be in the manual:

The chart above shows 4 possible settings and their effects.

The first rotary encoder controls how much 'extra power' is requested. See 9E vs. BE vs. 4E

The second rotary encoder controls the RPM high range (endpoint). See BE vs B7.

The encoders go from 8 (minimum) through 9, A, B, C, ..., F, 0, 1 ... 7 (maximum).
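The unusual dial order is easier to reason about as a step count. The snippet below is only an illustrative sketch: the names `DIAL_ORDER`, `dial_step` and `split_setting` are made up for this post, and it assumes a setting such as BE is read as first encoder, then second encoder.

```python
# Dial order as described above: 8 is the minimum, 7 is the maximum.
DIAL_ORDER = "89ABCDEF01234567"


def dial_step(position: str) -> int:
    """Return the ordinal step (0 = minimum, 15 = maximum) of one dial position."""
    if len(position) != 1:
        raise ValueError("expected a single dial character, e.g. 'B'")
    # str.index raises ValueError for characters that are not on the dial.
    return DIAL_ORDER.index(position.upper())


def split_setting(setting: str) -> tuple[int, int]:
    """Split a two-character setting such as 'BE' into (power_step, range_step)."""
    if len(setting) != 2:
        raise ValueError("expected two dial characters, e.g. 'BE'")
    return dial_step(setting[0]), dial_step(setting[1])


if __name__ == "__main__":
    for setting in ("9E", "BE", "4E", "B7"):
        print(setting, split_setting(setting))
```

Seen this way, 9E, BE and 4E differ only in the first (power) step, while BE and B7 differ only in the second (range) step.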

The second encoder controls when the RaceChip unit ceases intervention: a setting of X8 means at around 30% load (or RPM), X0 at around 60% load, and X7 keeps it active up to 100% of the RPM range. These are very loose numbers and will vary with every engine type.

The low range (starting point) cannot be configured; in my case it starts from idle.

Function

The unit intercepts the common rail pressure sensor value and sends a lower value back to the ECU (factory engine chip). This makes the ECU 'think' that the rail pressure has dropped, so it orders the fuel pump to increase the pressure. That's what 'demand' or 'power increase' in the charts above means. This may or may not translate to a real power increase, but it usually does.

The signal from that rail pressure sensor depends on many factors, but usually is proportional to the engine RPM under light driving conditions. Under heavy load or sudden acceleration the ECU will increase the pressure to (almost) 100%.

However, the ECU might order increased or decreased pressure when entering an emission cycle - warming up the engine from cold, heating the catalyst unit, purging the catalyst residue, etc. In this case the RaceChip tuning unit will interfere with this and cause increased emissions and/or increased fuel consumption. In my case it significantly increases (20-50%) the idle fuel consumption.

The first encoder tells the unit how much to shift the signal. The shift (in voltage) is proportional to the sensor signal, i.e. to the rail pressure.

A setting too high will cause the engine light to come on under normal driving conditions.

The second encoder sets the maximum RPM or load above which the unit stops interfering. Since the offset applied to the sensor is proportional to the pressure, this setting is really tied to the first one. Also, once the maximum set RPM is exceeded the unit just delivers the original signal back to the ECU, which might cause the latter to see a sudden 10-30% increase in rail pressure.

A setting too high will cause the engine light to come on under heavy acceleration or heavy load.

The unit provides no way of setting the lowest RPM or load, or of choosing another curve that is not linear with sharp drops.

Most of the factory ECUs choose a different engine curve based on the rail pressure sensor so in a way it's very similar to a chip tuning that flashes new engine curves into the ECU, while still not exceeding maximum allowed settings.

Possible effects

First off, there should usually be no real danger to the engine: the unit just makes the ECU choose another value that is already designed for the car.

An adverse effect might be that running with continuously increased rail pressure puts stress on the fuel lines that they were not engineered for. This means that the lines might start leaking on old cars, and the high-pressure fuel pump and the injectors could wear prematurely.

I don't see any way it could cause the engine to blow up, as some anecdotal reports have said.

There is a real possibility that the engine room noise will increase - the engine is working harder to make the high-pressure pump work harder. Both are the noisiest things around.

I don't see any way that a routine check will be able to detect that the unit was installed. So it's basically untraceable. However, pressure warnings - especially the ones resulting in a check engine light - might show up in the ECU log. But those can also be caused by lower-grade fuel or have other causes.

I'm more concerned about the sharp intervention of the unit: it's either on or off. There is a bit of hysteresis but no interpolation to factory defaults outside the range. Since I don't have enough experience with this I would rate this worry as inconclusive.

Also, the fine spikes/dips of the sensor are being filtered out; in newer engines those might be used to optimize the parameters. It essentially means less information is getting through to the ECU, which could mean that, for example, faulty or leaking injectors cannot be detected (if implemented).

Addendum

Now that I know what to search for I've found several sources that discuss the pros and cons of these "diesel tuning boxes".

First read should be a scientific paper that has done all the required tests and yields about the same conclusion as my empirical testing: low-end torque is increased, fuel consumption at city speeds increases, consumption at highway speeds decreases.

http://www.ijesit.com/Volume%202/Issue%201/IJESIT201301_35.pdf

Short article: http://www.sttemtec.com/en/tuning-box/tuning-box.php

And another one: http://www.endtuning.com/dieseltuningboxes/

The latter seems to imply that fuel mileage would be false but in my experience the on-board-computer showed [almost] the same results as the actual measured consumption. As far as I know, that OBC figure is measured with the fuel flow sensors and not derived from injector turn-on time times rail pressure. YMMV (literally) but I think instant consumption is derived (so, false) while long-term consumption is measured.

Emissions are likely worsened, but I haven't seen any real test on that.

Low-level reverse-engineering

This is the meat of the stuff and what my report above is based upon.

First off, a pen-annotated schematic that I've used to trace the connections:

Click to zoom in to see the annotations I'll be referring to.

G is ground, Vcc is supply voltage in (clamped to 3V), Ai+ means the positive input of the A amplifier. YF - yellow female lead, sensor input. YM - yellow male lead, unit output to ECU.

Engineering quality is good, there is plenty of protection which is good for reliability. That is mostly what you pay for in this unit. Feel free to study the image above, I will not go into more details on that.

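The supply voltage (red wire, usually 5V) is clamped through the D1 Zener diode to ~5V and used throughout for the microcontroller and the Microchip amplifier. Consumption is about 6mA.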

There is an LM7805 in the upper right corner but I believe it's only used for programming, as it only goes to the CN10 connector in the lower right corner.

The unit can function as a 1-channel device or a 2-channel device, in my case you can see the sticker with the number '1' marked. A 2-channel device will probably have a different firmware, but it might be controlled only by the connector type.

The quad-opamp on the center-left is used in a buffer-amplifier configuration: units A and B buffer the input from the real sensor while C and D buffer the output from the microcontroller.

The [1-channel setup] flow is as follows:

- The sensor signal is coming through a resistor and capacitor network, the fourth group on the top - near 4th 3-pin device (NPN transistor?)

- It goes into amplifier B positive input, the [buffered] output is sent to pin 16 on the MCU (AIN0).

- The MCU measures the signal level and, based on a simple lookup table, provides a PWM output on pin 18. It seems to be around 3.9 kHz.

- The PWM signal is low-pass-filtered through an RC network to turn it into a DC voltage and fed into amplifier C positive input.

- The C output is then sent to the second group on the top and exits to the ECU.
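To illustrate the PWM-to-DC step in the flow above, here is a rough simulation of a first-order RC low-pass fed by a square wave. Only the ~3.9 kHz PWM frequency comes from my measurements; the resistor and capacitor values, the 5 V swing and the function name `filtered_pwm` are placeholder assumptions, not values read off the board.

```python
def filtered_pwm(duty: float, vcc: float = 5.0, f_pwm: float = 3900.0,
                 r_ohm: float = 10_000.0, c_farad: float = 1e-6,
                 cycles: int = 400, steps_per_cycle: int = 100) -> float:
    """Simulate a first-order RC low-pass driven by a PWM square wave.

    Returns the capacitor voltage after `cycles` PWM periods.
    R and C are hypothetical values chosen only for illustration.
    """
    tau = r_ohm * c_farad                      # RC time constant in seconds
    dt = 1.0 / (f_pwm * steps_per_cycle)       # simulation time step
    high_steps = round(duty * steps_per_cycle)
    v_out = 0.0
    for _ in range(cycles):
        for step in range(steps_per_cycle):
            v_in = vcc if step < high_steps else 0.0
            v_out += (v_in - v_out) * dt / tau  # forward-Euler update
    return v_out


if __name__ == "__main__":
    for duty in (0.25, 0.50, 0.75):
        print(f"duty {duty:.2f} -> about {filtered_pwm(duty):.2f} V")
```

With a time constant much longer than the PWM period, the filtered voltage settles close to duty × supply voltage with only a small ripple, which is why the ECU sees what looks like a plain analog sensor signal.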

I assume amplifier A would provide input buffering and D the output buffer for the second channel.

There are two LEDs (green bottom right, red bottom left); I don't know what they are used for.

The 'lookup table' that derives the output is nothing more than a linear function - ax+b - where the a parameter (negative) is determined by the first encoder and b is zero (as far as I can see). There is no real memory content or curves stored on the device.

The second encoder controls the maximum sensor input voltage above which the unit stops responding, within a range of 3.56 to 5.5V. Above that value and below 2.48V the microcontroller will just send the input to the output, unaltered.
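Put together, the behavior described above can be sketched as a small piecewise function. This is my reading of it, not the actual firmware: I assume the output is the input plus a shift of a·x (with a negative), and the example values a = -0.10 and a 4.2 V cut-off are hypothetical. Hysteresis and the RC filtering are ignored here.

```python
LOW_CUTOFF_V = 2.48  # below this the input is passed through unaltered


def unit_output(v_in: float, a: float, high_cutoff_v: float) -> float:
    """Sketch of the transfer function: shift the signal only inside the window.

    a             -- negative gain set by the first encoder (assumed model)
    high_cutoff_v -- upper limit set by the second encoder (3.56 to 5.5 V)
    """
    if v_in < LOW_CUTOFF_V or v_in > high_cutoff_v:
        return v_in                 # outside the window: pass through
    return v_in + a * v_in          # inside the window: linear shift, b = 0


if __name__ == "__main__":
    a, cutoff = -0.10, 4.2          # hypothetical settings
    for v in (2.0, 3.0, 4.0, 4.2, 4.3):
        print(f"in {v:.2f} V -> out {unit_output(v, a, cutoff):.2f} V")
```

Note the jump in the output just above the cut-off: the ECU suddenly sees the unmodified (higher) sensor voltage, which is the step in rail pressure mentioned in the Function section.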

Since the input is going through an RC filter there is no transient response - i.e. it does not care how many injectors there are or what they do or what the real engine RPM is.

There is a slight hysteresis implemented, of around 0.1V, but it may be just a side-effect.
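For readers unfamiliar with hysteresis, the sketch below shows one way such a 0.1V band could work around the upper cut-off. Where exactly the band sits in the real unit is an assumption on my part, and the class name `WindowWithHysteresis` is made up for illustration.

```python
class WindowWithHysteresis:
    """Tracks whether the unit is intervening, with a small re-entry band."""

    def __init__(self, cutoff_v: float, hysteresis_v: float = 0.1):
        self.cutoff_v = cutoff_v          # upper cut-off from the second encoder
        self.hysteresis_v = hysteresis_v  # assumed width of the band
        self.active = True                # start out intervening

    def update(self, v_in: float) -> bool:
        """Feed one input sample; return True while the unit intervenes."""
        if self.active and v_in > self.cutoff_v:
            self.active = False           # input rose above the cut-off
        elif not self.active and v_in < self.cutoff_v - self.hysteresis_v:
            self.active = True            # input fell clearly below it again
        return self.active


if __name__ == "__main__":
    window = WindowWithHysteresis(cutoff_v=4.2)  # hypothetical cut-off
    for v in (4.0, 4.3, 4.15, 4.05):
        print(f"{v:.2f} V -> intervening: {window.update(v)}")
```

The point of the band is that a signal hovering right at the cut-off does not make the unit flip on and off with every sample.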

At the cut-in and cut-off points, the output signal seems to converge slower - it's either a protection against an abrupt take-over or just a side-effect of the RC network or perhaps I was not loading the output enough.

The above shows the response based on various inputs on a 2-second scale. You can just make out the roll-on and roll-off behavior. Light blue is the input, yellow is the output, magenta is the difference of those.

There is slightly more stuff happening than what I just wrote above but the basic concept is the same.

Future plans?

I really want a way to turn this unit on or off remotely or adjust the settings on the fly. The asking price for the Bluetooth unit is a bit steep (~500E) and fumbling under the hood to test each setting takes a lot of time. Some settings improve engine smoothness at the expense of low-end torque while others provide power but make the car noisy or yield error lights.

I won't provide any schematic for any of those changes and won't respond to queries about absolute values (voltages, delays) - the former would just mean the company would not be able to sell its high-end variants and the latter would mean that anyone could produce a clone without any R&D effort.

You'd have to consider that coming up with such a device involves significant cost in design iterations, car testing, (adequate) support and warranty.

However I would love to see better designs and more car hacking from the community, it seems to always be a lacking topic compared to other beaten-to-death designs.

The design is overly simplistic:

In my opinion the 'range' encoder should actually select some preselected curves instead of providing small useless increments. The whole box could be replaced with a few standard parts: LM317 to provide end point voltage, Zener/LED for start point, opamp to subtract offset.

There is no real bypass and if the unit fails the signal is not forwarded (unaltered) to the car, it just dies.

There is plenty of flash space and power to do some real calculations and interpolations as well as some on-the-fly learning and semi-closed-loop adjustments.

The claims of xx million operations per second on the product page are white lies; it actually does a few hundred, at best. Plus the opamp has a 1 MHz bandwidth.

A better topology would be a summing buffer amplifier - where one fixed PWM output (2.5V) is subtracted from the signal and another PWM output is added. That way, the 'power' can be increased or decreased (better fuel consumption) and in case of MCU failure the signal is passed unaltered.
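As a back-of-the-envelope check of that idea, the ideal summing stage can be written as a one-liner. This is a sketch under stated assumptions: an ideal unity-gain summing amplifier, a 5 V PWM swing, and a dead MCU whose two PWM pins both sit at 0 V. The function name `summing_output` is made up.

```python
def summing_output(v_sensor: float, duty_fixed: float, duty_adjust: float,
                   vcc: float = 5.0) -> float:
    """Ideal summing stage: sensor minus the fixed PWM level plus the adjustable one."""
    v_fixed = duty_fixed * vcc      # filtered fixed PWM, e.g. 50% duty -> 2.5 V
    v_adjust = duty_adjust * vcc    # filtered adjustable PWM
    return v_sensor - v_fixed + v_adjust


if __name__ == "__main__":
    sensor = 3.0  # example sensor voltage
    print("lower the signal:", summing_output(sensor, 0.50, 0.46))
    print("raise the signal:", summing_output(sensor, 0.50, 0.54))
    print("MCU dead        :", summing_output(sensor, 0.0, 0.0))
```

Because the two PWM contributions cancel whenever they are equal, the signal can be nudged in either direction around the 2.5V midpoint, and with both outputs at zero the sensor voltage reaches the ECU unchanged.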


I'm hoping you could help me on an issue I am having with my Racechip box.

I have had it fitted to my car for about 3 years and now recently the car has started cutting out when at low rpm, (i.e. when approaching a junction slowing down and the engine goes below 1500-2000rpm). I have only noticed the problem after a few hours of driving and only after sitting in heavy traffic which makes me think it could be to do with a DPF regen as you mentioned before. When the car cuts out no engine warning light comes on or error codes and the car restarts as if the car has stalled. Since removing the tuning box the issue has not occurred once. Any ideas or advice would be appreciated.

Thanks

Jonny

The DPF usually has a pressure sensor to signal if it's clogged, it should show up as a fault. In my case, the sensor got bad and I got faults with and without the unit.

The Racechip has a setting for the maximum RPM but I don't think it has one for the minimum. Either way, this design cannot take RPM into consideration, only fuel pressure, so the only advice I can offer is to lower the setting on your unit (first encoder, by 1 click) and see if the behavior improves.

Thank you so much for this post, it really helped me to understand how my RaceChip works. I have a question that I hope you can help me with: What would be a good case scenario in where shifting up or down the second rotary control might be a good idea? As per your explanation, it will set the RPM "working window" in where the chip is meant to work, so past this value it will revert itself to "factory settings" until the RPMs are back in track. As there is no minimum value for this (idle in your case), do shifting up the second control just expands the RPM range instead of moving up like the steps in a ladder? Why would I ever want to move the secondary control from the default? That is something that is not even mentioned in the official documents and videos from RaceChip...

Thanks a lot, and keep up the good work.

You are right, turning up the second switch will increase the RPM higher range. The most likely reason to do so is to avoid the "check engine" light in case of high loads, e.g. pedal-to-the-metal from a low speed in a high gear. Can also happen while uphill, engine is cold, etc.

If you do get the check engine light but still want to keep the RPM range high, you need to decrease the first encoder.

Another way to handle this is to keep the RPM range low, in case you want only low-RPM torque and keep increasing the first encoder until you get engine errors, then dial it down.

To put it in more technical terms, the ECU will likely give out an error if the signal is higher than 4.5-5V, depending on the car, which the "tuning" unit above is able to do, depending on the switch settings.

Can you identify the rpm range for every settings of the second encoder? In your case, you mentioned that X8 was at 30% load will cease intervention, X0 at 60% and X7 at 100%. Can you please complete all the diagram starting from X8 up to X7 with 1 increment only. Example: X8 = 30%, X9 = ?, XA = ? and so forth. Thank you so much and keep up the good work!!

Hi, thank you for the kind words. I would like to avoid giving out the complete map since that could be considered some kind of intellectual property. Even better, you can easily do something better than that, like what my unit does (detailed in later posts).

The unit operates based on input voltage, so xE will stop at ~4.2V, x7 will stop at 5V. Similarly 8x will increase the output voltage by ~9%, Fx by ~17% and you can extrapolate any values from that, which is what they do anyway.

Very informative articles. Thanks for sharing.
